About a decade ago, when the technique known as LCN typing was developed, it referred to the analysis of samples containing less than 100 pg of DNA (Gill P. et al., 2000; Gill P., 2001; Budowle B. et al., 2009). More recently, LCN has been defined as a template value of less than 200 pg, a value largely associated with the quantity of DNA described by different authors (Caddy B., Taylor D.R., Linacre A.M., 2009) as the stochastic threshold for conventional STR typing (Moretti T.R. et al., 2001 (a); Moretti et al., 2001 (b)).

These DNA threshold quantities are based on an amount of template DNA which shows an exaggerated peak height imbalance, drop-out, and an increase in laboratory contamination (Gill P. et al., 2006).

Numerous validation studies have been conducted on commercial kits for DNA typing, and the conditions in which these kits produce reliable results have been clearly defined (Micka K.A. et al., 1999; Cotton E. A. et al., 2000; Krenke B.E. et al., 2002; Holt C.L. et al., 2002; Collins P.J. et al., 2004; Mulero J.J. et al., 2008). The optimal quantities of template are thus well-specified, and the range usually varies between 0.5-2 ng of template DNA (1 ng is usually considered the optimal quantity by most commercial kits).
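The quantity ranges quoted so far can be summarized in a short sketch. It is only illustrative: the function names and category labels are hypothetical, and the figure of roughly 6.6 pg of DNA per diploid human cell is a commonly quoted approximation that does not come from the sources cited here.

```python
# Approximate mass of one diploid human genome in picograms.
# Assumption: a commonly quoted approximation, not a value from this text.
PG_PER_DIPLOID_CELL = 6.6


def classify_template(pg: float) -> str:
    """Place a template quantity (in pg) in the ranges quoted in the text."""
    if pg < 0:
        raise ValueError("template quantity cannot be negative")
    if pg < 100:
        return "LCN (original definition, < 100 pg)"
    if pg < 200:
        return "LCN (later definition, < 200 pg)"
    if 500 <= pg <= 2000:
        return "typical kit range (0.5-2 ng)"
    return "outside the ranges named in the text"


def approx_diploid_cells(pg: float) -> float:
    """Rough number of diploid genome equivalents in the template."""
    return pg / PG_PER_DIPLOID_CELL


if __name__ == "__main__":
    for quantity in (50, 150, 1000):
        print(quantity, "pg:", classify_template(quantity),
              f"(about {approx_diploid_cells(quantity):.0f} cells)")
```

Under that approximation, 100 pg corresponds to roughly 15 diploid cells, which gives a sense of why the term "low copy number" is used.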

In what follows, we report the scientific contributions from preeminent scientists on the subject of Low Copy Number, as reported in the international literature.

The tragic terrorist attack in Omagh, Northern Ireland, in 1998 (the bombing of a market, with a death toll of 29 victims and 200 injured) raised the question of the validity of LCN typing and its use in legal cases for the first time. The alleged perpetrator of the attack was initially arrested on the basis of LCN typing results, but later released due to the alleged lack of adequate security measures in the collection, transportation and handling of the seized items, as well as [the absence of] adequate laboratory precautions for LCN typing (The Queen v Sean Hoey. Neutral Citation Number [2007] NICC 49).

The criticisms raised by this judicial case led to the creation of a Commission to review LCN typing technologies: this Commission went on to state that LCN typing, as practiced specifically in the United Kingdom, is a “robust” method and “fit for purpose” (Caddy B., Taylor D.R., Linacre A.M., 2009 – known also as the “Caddy Report”). However, at the same time it offered a series of useful recommendations to improve the reliability of the methodology and emphasized, amongst other things, the following problems associated with LCN typing:

- greater potential risk of error in comparison with conventional STR typing protocols;

- errors of interpretation due to allele drop-in, allele drop-out, peak height imbalance and large stutter peaks;

- the need for a robust and reliable quantification method in order to determine the amount of DNA available for analysis;

- LCN profiles are not generally reproducible and, due to the potential for error, the probative value of the results may not be evaluated correctly;

- the interpretation of profiles derived from mixtures using LCN typing is problematic, and at the moment there are no interpretation guidelines based on reliable validation studies;

- due to the sensitivity of the method and the types of samples analyzed (for example, “touch” samples), the LCN profile may not be relevant to the specific case;

- the evidence cannot be used for exculpatory purposes;

- instructions about the proper collection of items and protocols regarding their handling have not been clearly established or communicated;

- reagents, consumables and laboratory instrumentation can contain low levels of extraneous DNA which may complicate the interpretation of LCN typing results.

1. Methods of LCN Typing

There are a number of alternative ways to increase the sensitivity of the analysis to enable LCN typing. These include: increasing PCR cycle number (Gill P. et al., 2000; Whitaker J.P. et al., 2001; Gill P., 2001; Kloosterman A.D. et al., 2003; Budowle B. et al., 2009); Nested PCR (Strom C.M., Rechitsky S., 1998); reducing the volume of the PCR (Gaines M.L. et al., 2002; Leclair B. et al., 2003; Budowle B. et al., 2009); Whole Genome Amplification prior to the PCR (Hanson E.K., Ballantyne J., 2005); strengthening the fluorescent dye signal; use of higher purity formamide in sample preparation for capillary electrophoresis (Budowle B. et al., 2009); post-PCR clean-up to remove ions which compete with DNA during electrokinetic injection (Smith P.J., Ballantyne J., 2007; Forster L. et al., 2008; Budowle B. et al., 2009); increasing injection time (Budowle B. et al., 2009).

Due to the important limitations in typing samples containing low concentrations of DNA, many scientists urge caution in the practice and interpretation of LCN (Gill P. et al., 2000; Gill P., 2001; Kloosterman A.D. et al., 2003; Budowle B. et al., 2009).

Budowle B. et al. (2009) call for caution, and suggest the use of LCN exclusively for identifying missing persons (including the victims of mass disasters) and for research purposes. On the other hand, the aforementioned authors advise against the use of current LCN methods in criminal proceedings, since current methods, technologies and recommendations still do not allow the problems which characterize LCN typing to be overcome.

In particular, since by definition LCN typing cannot provide reproducible results, and therefore the same result may not be expected if the same sample is analyzed twice, the method cannot be considered robust according to conventional standards (Budowle B. et al., 2009).

2. Laboratory Procedures

All the practices designed to minimize laboratory contamination are recognized as important and effective: for example, the use of pressurized facilities, suitable laboratory clothing, the analysis of one sample at a time, the use of instruments and materials free of DNA, as well as decontamination practices (exposure to UV rays and/or ethylene oxide) (Shaw K. et al., 2008). Unfortunately, these security measures have still not been officially introduced into the protocols for the collection and handling of items (Caddy B. et al., 2009; The Queen v Sean Hoey. Neutral Citation Number [2007] NICC 49); in fact, this signals the urgent need for specific training in sampling procedures for first responders and investigators at the crime scene.

3. Problems associated with low template quantities

The problems which arise from the analysis of sub-optimal quantities of template DNA in a PCR are numerous, and these problems become even more apparent when the template quantity is reduced. Moreover, the interpretation of mixtures has yet to be well-defined.

The fundamental issues of the low template quantity problem are the following: stochastic effects, the detection threshold, profile interpretation, allele drop-out and heterozygous peak imbalance, stutter, contamination, replicate analysis, appropriate controls, and limitations of use.

3.1 Stochastic effects: Due to the kinetics of the PCR process, a low quantity of starting template will be subject to stochastic effects. In fact, during the first cycles, the primer may not bind in the same way for each allele at a given locus, and therefore a significant imbalance between allelic products may be noted or, in some cases, the total loss of one or both alleles. In other words, an LCN DNA template in a PCR may show stochastic amplification phenomena, visible both as a substantial imbalance of two alleles at a given heterozygous locus, and as allelic drop-out or an increase in stutter (Gill P. et al., 2000; Whitaker J.P. et al., 2001; Kloosterman A.D. et al., 2003; Smith P.J., Ballantyne J., 2007; Forster L. et al., 2008; Budowle B. et al., 2009).
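A toy model can make the effect of a small number of starting molecules concrete. The sketch below is not taken from the cited studies: it assumes, purely for illustration, that each template copy of an allele is independently captured and amplified with a hypothetical probability `p_success`, so that the allele drops out only when every copy fails.

```python
import random


def allele_dropout_probability(copies: int, p_success: float) -> float:
    """Chance that none of `copies` template molecules of an allele is amplified."""
    return (1.0 - p_success) ** copies


def simulate_dropout(copies: int, p_success: float, trials: int, seed: int = 0) -> float:
    """Monte Carlo estimate of the same probability under the toy model."""
    rng = random.Random(seed)
    dropped = 0
    for _ in range(trials):
        # Count how many copies of the allele are amplified in this trial.
        amplified = sum(1 for _ in range(copies) if rng.random() < p_success)
        if amplified == 0:
            dropped += 1
    return dropped / trials


if __name__ == "__main__":
    # p_success = 0.5 is an arbitrary illustrative value.
    for n in (1, 3, 15, 150):
        print(n, "copies:", allele_dropout_probability(n, 0.5))
```

Under these assumptions the drop-out probability falls geometrically as the number of copies grows, which is consistent with stochastic effects being most visible at the lowest template quantities.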

3.2 Detection threshold: Usually, it is recommended that quantities of DNA able to reduce stochastic effects to manageable levels are used for the PCR. However, since differences in the quantification of the template and possible inaccuracies in the pipetted volume may influence the amount of DNA actually present in the PCR, a stochastic interpretation threshold must be used for STR typing results (Minimum Interpretation Threshold “MIT”, Budowle B. et al., 2009).

A minimum peak height (or area), established by internal validation studies at the individual laboratory, therefore serves as a stochastic control. The peaks which appear below this threshold must not be interpreted or are interpreted with extreme caution and for very limited purposes. With LCN typing, it needs to be emphasized that peak height is substantially increased due to additional PCR cycles, or to post-PCR clean-up procedures. Therefore, since the LCN situation refers to the interpretation of results which would ordinarily be below the stochastic interpretation threshold, minimum peak height criteria such as those used for standard STR typing (with samples containing from 250 pg to 1 ng of DNA) cannot exist. Currently therefore, there is no valid method to establish a threshold for LCN typing, and this will continue to be one of the major weak points of the application.
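For conventional typing, applying a minimum peak height can be sketched as follows. The data layout, the function names and the example threshold are hypothetical; in practice the threshold comes from each laboratory's internal validation, and, as noted above, no equivalent criterion is currently established for LCN results.

```python
from typing import List, Tuple

Peak = Tuple[str, float]  # (allele label, peak height in RFU)


def split_by_threshold(peaks: List[Peak],
                       threshold_rfu: float) -> Tuple[List[Peak], List[Peak]]:
    """Separate peaks at or above the threshold from those below it."""
    above = [p for p in peaks if p[1] >= threshold_rfu]
    below = [p for p in peaks if p[1] < threshold_rfu]
    return above, below


def peak_height_ratio(height_a: float, height_b: float) -> float:
    """Ratio of the lower to the higher peak at a heterozygous locus (0 to 1)."""
    if height_a <= 0 or height_b <= 0:
        raise ValueError("peak heights must be positive")
    return min(height_a, height_b) / max(height_a, height_b)


if __name__ == "__main__":
    # Invented peak data and an invented 150 RFU threshold, for illustration only.
    locus_peaks = [("15", 820.0), ("16", 310.0), ("17", 60.0)]
    interpretable, cautious = split_by_threshold(locus_peaks, 150.0)
    print("above threshold:", interpretable)
    print("below threshold:", cautious)
    print("peak height ratio:", round(peak_height_ratio(820.0, 310.0), 2))
```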

3.3 Profile Interpretation: The two factors which influence the robustness of LCN typing are stochastic effects and the sensitivity of detection. The protocols proposed for the interpretation of profiles generated by LCN therefore consider these characteristic elements (Gill P. et al., 2000). However, while these interpretation guidelines are based on studies of single source profiles using near optimal samples, the poor quality which often characterizes samples of forensic interest and mixtures increases the problems of interpreting LCN data.

To date, well-developed guidelines for LCN interpretation of mixtures have still not been described. Since many touch samples are mixtures (Gill P., 2001; Caddy B. et al., 2009), this lack in validation studies and interpretation guidelines must be considered a serious deficiency.

3.4 Allele Drop-out: Allele drop-out is the phenomenon associated with LCN typing which is simplest to describe. For example, if an allele 15 is observed in the LCN evidence, then on the basis of that evidence any individual who is homozygous for allele 15, or heterozygous 15, X (where X could be any allele), cannot be excluded as a potential contributor to that sample. In order to support the evidence, the ‘2p rule’ can be used for any individual allele at a particular locus (Budowle B. et al., 1991) and this calculation will be of a conservative type. The observation of 2 alleles at a locus may be considered to represent a heterozygous profile and therefore suggests that allele drop-out did not occur. The Likelihood Ratio for a single source sample can be calculated using 1/(2fa) for the homozygotes and 1/(2fafb) for the heterozygotes, where fa is the frequency of allele a and fb the frequency of allele b (Gill P. et al., 2000). In addition, Gill et al. (2000) recommend modifying the calculation by considering the probability of allele drop-out (p(D)). The p(D) is based on experimental observation. However, it is difficult to justify p(D) based exclusively on experimental studies using optimal samples and applying this calculation to any individual real case. Drop-out is, in fact, related both to the quantity and the quality of the sample under examination. These parameters are often undefined in LCN samples and are specific to each individual sample. Allele drop-out cannot be identified as such based only on controlled validation studies conducted in the laboratory, and therefore further research is needed before the values of p(D) can be provided.
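The two likelihood ratio expressions quoted above can be evaluated with a few lines of code. This sketch reads them as 1/(2·fa) and 1/(2·fa·fb), which is an interpretation of the notation; the function names and the example allele frequencies are hypothetical, and the drop-out correction p(D) recommended by Gill et al. (2000) is not modelled.

```python
def _check_frequency(f: float) -> None:
    """Allele frequencies must lie in (0, 1]."""
    if not 0.0 < f <= 1.0:
        raise ValueError("allele frequency must be in (0, 1]")


def lr_homozygote(f_a: float) -> float:
    """Likelihood ratio 1 / (2 * f_a) for a single observed allele."""
    _check_frequency(f_a)
    return 1.0 / (2.0 * f_a)


def lr_heterozygote(f_a: float, f_b: float) -> float:
    """Likelihood ratio 1 / (2 * f_a * f_b) for two observed alleles."""
    _check_frequency(f_a)
    _check_frequency(f_b)
    return 1.0 / (2.0 * f_a * f_b)


if __name__ == "__main__":
    # Invented frequencies, for illustration only.
    print("one allele, f_a = 0.10:", lr_homozygote(0.10))
    print("two alleles, f_a = 0.10, f_b = 0.20:", lr_heterozygote(0.10, 0.20))
```

With a frequency of 0.10 the single-allele expression gives a ratio of about 5, which illustrates how little weight a single observed allele carries under this calculation.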

3.5 Stutter: During the generation of LCN STR profiles, the percentage value of stutter is variable, and absolutely not informative in that a stutter peak may exceed the height or area of the associated allele peak (Gill P. et al., 2000, Gill P., 2001). Although some researchers (Gill P. et al., 2000) have tried to define the probability of stutter through statistical calculations, the probability and percentage value of stutter with respect to the true allele cannot be predicted. In fact, it is possible that a stutter peak may show twice in repeated analyses and therefore be interpreted as a true allele. The probability of detecting stutter twice in repeated analyses has not yet been studied in depth, and we also await recommendations on the interpretation of stutter in mixed samples.

3.6 Contamination: in its recommendations for the interpretation of mixtures, the DNA Commission of the ISFG gives the following definition of contamination: “DNA introduced after the crime and originating from a source not related to the crime scene: investigators, laboratory technicians, laboratory instrumentation” (Howitt T., 2003; Gill P., Kirkham A., 2004).

This is a very restricted definition of the source of contamination; in fact, low-level contaminant DNA may derive from reagents and other laboratory consumables, from laboratory staff, and from cross-contamination from sample to sample.

Many LCN samples are ‘touch samples’, and therefore low levels of DNA originating from environmental contamination at the crime scene may, for example, be identified on the item. In addition, contamination can happen during the collection and handling of samples. Therefore, the appearance of allele drop-in can be both intrinsic to the samples and induced during collection at the crime scene. Predicting the probability of drop-in based exclusively on experimental data may thus not be useful in simulating the circumstances in which drop-in may have occurred. A further difficulty, particularly apparent in mixtures, lies in determining which allele constitutes a drop-in. In fact, these difficulties tend to create bias in deciding whether or not there is support for contamination.

Statements about a profile obtained from the sample under examination regarding the determination as to which is a true allele and which a drop-in, must necessarily be made without knowledge of the suspect’s profile; only in such a way, in fact, can a qualitatively unimpeachable and unbiased approach to the interpretation of the profile from the sample be guaranteed. Interpreting a profile from a sample with the suspect’s reference profile to hand is indicative of a biased approach, and is in total contrast to the absolutely objective nature of forensic science.

Due to the limitations of LCN typing, extreme care needs to be taken not to overstep quality interpretation practices, in order to ensure that interpretive error is minimized.

As well as the risks caused by handling of the samples, the analysis of such a small amount of starting material inevitably means an exaggeration of stochastic phenomena in those samples. Increasing the sensitivity of the system will also increase the risk of contamination in the samples being examined (Budowle B. et al., 2009).

Gill P. and Buckleton J.S. (2010) agree with Budowle B. et al. (2009) on the issues raised about the contamination problem. In addition, responding to the criticism by Budowle et al. that their definition of drop-in is restricted to laboratory processing, Gill emphasizes that their definitions and analyses are not limited to the phases of laboratory processing, but include handover phases from sources at the crime scene, at the Evidence Collection Unit and at the DNA Unit (Gill P., Kirkham A., 2004).

Figure 1: generalized time sequence illustrating the possible methods by which a DNA profile may be propagated.

Regarding the problem of contamination, the same authors emphasize the substantial difference between the phenomenon of drop-in and what is known as “gross contamination”: while the first refers to the appearance of one or two alleles in a sample originating from unrelated sources, the second refers instead to multiple alleles originating from a single unknown source (and therefore these alleles are dependent events). In this respect, the authors emphasize that the principal risk of (random) contamination is erroneous exclusion, particularly if the contaminant profile masks that of the perpetrator.

The authors state that in their opinion, the limitations described regarding Low Template – DNA (LT-DNA) must be applied to all methods of DNA typing, since all DNA profiles may potentially be subject to the same phenomena originally attributed to LT-DNA. They also hold that if the due precautions are taken and the Judge is informed about the limitations of the technique used, it is the task of the latter to assess the weight of the evidence. It is not up to the scientist to decide, based on purely arbitrary criteria, whether or not the evidence being examined should be brought to the attention of the Court.

The aforementioned authors stress that the weight of the evidence is a fundamental issue. The evidence can arise in three principal ways: a) in an ‘innocent’ way; b) as a result of the criminal event itself; c) as a result of contamination or involuntary transfer (Gill P., 2002). There is a widespread public opinion that “if the DNA evidence matches the suspect then s/he must be guilty of the crime”, and this opinion unfortunately extends to some scientists, judges and lawyers. There is also a perception that the failure to convict signifies a failure of science: this is extremely dangerous, and it is therefore important to defend the idea that whether or not a suspect is convicted is an irrelevant question for the scientist, whose responsibility must only be to correctly explain the evidence in the context of the specific case in question.

The question as to which was the actual method of transfer of the suspect’s DNA onto the item must therefore be assessed by the judge and not by the scientist, whose principal role is to explain the various possible methods of transfer which exist as well as the relative probabilities associated with each of these (Gill P., 2002).

We report below the “Precautions against contamination” suggested by J.M. Butler, in cases of samples of biological material containing low amounts of DNA.

“The sensitivity of the PCR requires constant attention from the laboratory staff to ensure that contamination does not affect DNA typing. Contamination of the PCR reaction is always a problem, because the technique is very sensitive due to the low amounts of DNA. If not careful, an operative preparing the PCR reaction may inadvertently add his/her own DNA to the reaction. In the same way, the police officer or technician at the crime scene when the evidence is collected may contaminate the sample if adequate precautions are not taken. For this reason, each evidence sample should be taken with clean tweezers or handled with disposable gloves which must be changed frequently.

In order to detect laboratory-induced contamination, everyone in a forensic DNA laboratory must be typed, so that there is a record of all possible contaminant DNA profiles (staff elimination database).

Laboratory staff should be appropriately covered during contact with samples prior to the PCR amplification. Appropriate protection includes lab coats and gloves as well as masks and caps to prevent skin cells or hairs falling into the amplification tubes.

These precautions are particularly important when working with very small quantities of sample or with degraded samples.

Some of the useful precautions for avoiding contamination in the PCR reaction in the laboratory setting are:

- The pre and post-PCR processing areas should be physically separate;

- The instrumentation as well as the tubes and reagents for the PCR should be kept separate from other laboratory instruments, particularly those used for analysis of the PCR product;

- Disposable gloves should be worn and changed frequently;

- The reactions should be prepared under a fume hood, if available;

- Aerosol-resistant tips should be used, and should be changed for each sample in order to prevent cross-contamination during transfer of the liquids;

- The reagents should be prepared carefully to avoid the presence of any contaminant DNA or nucleases;

- Ultra-violet irradiation of the PCR preparation area when the area is not in use, as well as the cleaning of the work area and instrumentation with isopropanol and/or bleach solutions at 10%, help to ensure that extraneous DNA molecules are destroyed before DNA extraction or PCR preparation.

The PCR product may show results which originate from amplified DNA contaminating a sample which has not yet been amplified. Since the amplified DNA is much more concentrated than the unamplified template DNA, the first will be preferentially copied during the PCR, and therefore the unamplified sample will be hidden.

The inadvertent transfer of even a very small volume of PCR amplicon to an unamplified DNA sample may result in the ‘contaminant’ sequence being amplified and detected.

For this reason, the samples being examined are usually worked on in the laboratory before the reference samples of the suspect, in order to avoid any possibility of the evidence being contaminated with the already amplified DNA from the suspect.”
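The staff elimination database mentioned in the precautions above lends itself to a simple illustration. The sketch below is hypothetical throughout: the profile layout, the function name and the example genotypes are invented, and the check ignores allele drop-out, so a partial staff contribution to an LCN sample could be missed.

```python
from typing import Dict, List, Set

Profile = Dict[str, Set[str]]  # locus name -> set of allele labels


def staff_not_excluded(sample: Profile, staff_db: Dict[str, Profile]) -> List[str]:
    """Return staff members whose alleles all appear in the sample profile.

    Only loci typed in both profiles are compared.
    """
    hits = []
    for name, staff_profile in staff_db.items():
        shared_loci = set(sample) & set(staff_profile)
        if shared_loci and all(staff_profile[locus] <= sample[locus]
                               for locus in shared_loci):
            hits.append(name)
    return sorted(hits)


if __name__ == "__main__":
    # Invented genotypes, for illustration only.
    sample = {"D3S1358": {"15", "16", "17"}, "vWA": {"14", "18"}}
    staff_db = {
        "analyst_1": {"D3S1358": {"15", "17"}, "vWA": {"14", "18"}},
        "analyst_2": {"D3S1358": {"14", "15"}, "vWA": {"16"}},
    }
    print(staff_not_excluded(sample, staff_db))
```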

3.7 Replicate analyses

The most commonly used approach for the designation of an allele in an LCN sample requires the subdivision of the sample into two or more aliquots, and recording only those alleles which are common to at least two replicates (Gill P. et al., 2000; Gill P., 2001; Gill P. et al., 2007). The advantage of this approach is that if drop-in occurs randomly and infrequently, observing an allele several times increases the reliability that it actually derives from the sample being examined (assuming that no contamination happened during the sampling phase).
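The replicate rule described above, recording only alleles seen in at least two replicates, can be sketched as follows. The profile representation and the function name are assumptions made for illustration, and the example genotypes are invented.

```python
from collections import Counter
from typing import Dict, List, Set

Profile = Dict[str, Set[str]]  # locus name -> set of allele labels


def consensus_profile(replicates: List[Profile], min_count: int = 2) -> Profile:
    """Keep, at each locus, the alleles observed in at least `min_count` replicates."""
    loci = set().union(*(r.keys() for r in replicates))
    consensus: Profile = {}
    for locus in sorted(loci):
        # Each replicate contributes at most one observation per allele.
        counts = Counter(a for r in replicates for a in r.get(locus, set()))
        consensus[locus] = {a for a, n in counts.items() if n >= min_count}
    return consensus


if __name__ == "__main__":
    replicates = [
        {"D3S1358": {"15", "16"}, "vWA": {"14"}},
        {"D3S1358": {"15"}, "vWA": {"18"}},
        {"D3S1358": {"15", "17"}, "vWA": {"14", "18"}},
    ]
    print(consensus_profile(replicates))
```

In this invented example alleles 16 and 17 at D3S1358 each appear only once and are discarded, while 15 is retained; the rule does not by itself say whether a discarded allele was drop-in or a true allele that dropped out elsewhere.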

Most scientists who work with LCN stress the need to perform 2-3 replications and state that an allele must be observed at least twice to be denominated as such (Taberlet P. et al., 1996, even invoke up to 7 replications to increase the reliability of allele denomination): allele redundancy in replicates is therefore the cornerstone of reliable LCN typing.

However, the exact number of replications, the number of times an allele is observed, and the degree of reliability (quantitatively or qualitatively) need to be better defined.

In practice, it needs to be considered that performing more than 2-3 replications is often not possible; therefore most interpretation guidelines and degree of reliability assertions must be applied and declared based on the analysis of 2-3 replicates.

Common sense would suggest that dividing a sample into multiple aliquots increases the limitations of LCN typing (Budowle B. et al., 2009), and that every possible effort should be made to concentrate as much template as possible into a single reaction: however, to date, allele redundancy is the only accepted approach.

Studies on dilutions and redundancy have, up to now, been based on laboratory control samples: [these are] completely different to [samples] originating from items from real cases, possessing undetermined (and often degraded) quantities of DNA, and which may contain PCR inhibitors also able to affect allele drop-out.

Therefore, based on these numerous uncertainties, the prompt definition of the data to include in the final report concerning the number of replicates, the degree of reliability related to the uncertainty of the nature of the sample being examined, and its quality, would be desirable.

3.8 Controls

A further issue characteristic of LCN typing is the number and type of control samples which should be used. For example, the number of negative controls which should be analyzed to verify that drop-in is sporadic has not yet been discussed. In addition, what constitutes a positive control needs to be better specified: it should be reasonable that positive controls have the same quantity of DNA as is present in the sample being examined, but in practice this is very difficult [to achieve] since the amount of DNA in a sample is unknown and difficult to approximate in mixed samples. Perhaps a range of template quantity should be proposed (for instance, from 20 pg to 200 pg). If the quantity in a positive control approximates that of an LCN sample, allele drop-in and probably also drop-out will happen. Therefore, if a positive control does not provide a sure result with reliability, the control loses its function. These problems relating to what constitutes an appropriate positive control must be addressed.
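One way to summarize a batch of negative controls is sketched below. It is a rough heuristic rather than an established protocol: the cut-off of two alleles follows the distinction made earlier between drop-in (one or two alleles) and gross contamination (multiple alleles), while the function name, the data layout and the example data are hypothetical.

```python
from typing import Dict, List


def review_negative_controls(controls: List[List[str]],
                             max_dropin_alleles: int = 2) -> Dict[str, float]:
    """Summarize how many negative controls show no, few or many alleles.

    `controls` holds, for each negative control, the list of alleles detected.
    """
    clean = sum(1 for c in controls if len(c) == 0)
    drop_in = sum(1 for c in controls if 0 < len(c) <= max_dropin_alleles)
    gross = sum(1 for c in controls if len(c) > max_dropin_alleles)
    total = len(controls)
    affected = (drop_in + gross) / total if total else 0.0
    return {
        "clean": clean,
        "drop_in": drop_in,
        "possible_gross": gross,
        "fraction_affected": affected,
    }


if __name__ == "__main__":
    # Invented results for four negative controls.
    batch = [[], ["D3S1358:15"], [],
             ["vWA:14", "vWA:18", "D3S1358:16", "TH01:9"]]
    print(review_negative_controls(batch))
```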

3.9 Limitations of LCN Typing

Some of the differences of LCN typing compared to conventional STR typing can affect its utility (Gill P., 2001; Budowle B. et al., 2009).

Due to the small quantities of DNA contained in LCN samples and the extreme sensitivity of the method (due essentially to the “optimization” of the PCR and capillary electrophoresis protocol) levels of “background” DNA as well as DNA from casual contact may be detected; thus profiles possibly emerging from these analyses may not relate to the specific case being examined.

In addition, Budowle B. et al. (2009) assert the need to inform the International Scientific Community of the limitations and problems associated with LCN techniques, so that all those involved in a specific investigative or legal case are aware of the risks and limitations which can affect the results of the investigations. Publicizing the potential of the application of LCN typing without describing its limitations is not a responsible role for the forensic geneticist to take.

In this regard, the essential aspects to remember are the following:

- LCN typing is not a reproducible technique, and this limitation must always be well-emphasized in the reports;

- contamination from handling is possible, and this possibility must always be considered;

- a concentrated sample can provide better results in an analysis than replicates which use allele redundancy for interpretation;

- the number and type of controls used needs to be clearly defined, and to these should be attributed a quantitative or qualitative reliability;

- to date, interpretation guidelines have not been well-defined, and those which exist only relate to samples from a single source; mixture interpretation has not yet been validated;

- contamination or allele drop-in may have different sources;

- due to the increased sensitivity of the method, secondary transfer cannot be excluded as a possible explanation for the results obtained from LCN typing;

- STR kits, reagents and other materials may not have been subjected to effective quality controls to detect possible contamination from extraneous DNA;

- statistical interpretations and supporting data for the calculation of probabilities need to be better defined and developed to address the uncertainty associated with LCN typing;

- as the analysis provides results from very small samples, the material origin of the DNA cannot, to date, be inferred.

According to Budowle B. et al. (2009), LCN typing by its nature cannot be considered a robust method. While efforts regarding the implementation of the use of this method have principally focused on reducing laboratory contamination and on using redundancy for reliability, a more appropriate approach might now be to improve the phases of collection, extraction and PCR.

The approaches to consider include:

- improving collection methodology at the crime scene, and training staff in charge of the investigations there;

- increasing efficiency of recovery and the yield from a collection instrument and/or from the extract to try and increase the amount of template DNA available so that the sample no longer needs to be classified as LCN but can then be analyzed by conventional methods (Budimlija Z.M. et al., 2005; Schiffner L.A. et al., 2005);

- improving the PCR so that stochastic effects are less important with limited quantities of template DNA;

- considering SNPs as genetic markers of primary use for DNA of low and degraded quantity (Budowle B., van Daal A., 2008; Sanchez J.J. et al., 2006);

- improving the quality of the DNA sample (and the quantity of template available) using DNA repair and/or Whole Genome Amplification methods (Smith P.J., Ballantyne J., 2007; Nunez A.N. et al., 2008).

Caragine T. et al. (2009) describe the modifications made to the protocols commonly used in STR analysis to improve the low rate of success associated with LCN typing.

These modifications substantially consist of:

- strengthening the PCR through increasing the number of cycles;

- reducing the reaction volume and doubling the annealing time;

- modifying the capillary electrophoresis, by increasing the injection time and voltage (Bajda E. et al., 2004).

The authors stress that intensifying the DNA signal with these methods inevitably increases the risk of detecting contamination.

The protection measures advised and used by the authors to reduce contamination to the minimum possible are the following:

- Laboratory staff – who must always wear double gloves, hair coverings, shoe covers, safety glasses and lab coats – must work exclusively in areas dedicated to the analysis of LT-DNA samples or pre-amplification;

- Fume hoods must be set up for each phase of the procedure (Kwok S., Higuchi R., 1989). Disposable gloves must be changed between examining individual items, between one analysis and another, and several times during the preparation of a single sample;

- Workbench surfaces, materials and instruments must be washed first with 10% bleach, then with water and finally with 70% ethanol both before and after each procedure, and also between the analysis of one item and another;

- A thorough weekly cleaning of the entire laboratory and the facilities must also be carried out. All the instruments and the water must be irradiated in a UV radiation cabinet before use;

- Regarding manual processes, it is stressed that only one sample tube must be opened at a time, using a clean tube opener or paper tissue as an alternative;

- Before analyzing samples with capillary electrophoresis, the standard (that used by the authors for this validation study is the GeneScan® 500 LIZ® Size Standard) must be run by itself in order to confirm the absence of extraneous DNA in the capillary;

- All reagents used in the phases of extraction, quantification, amplification and separation must be preemptively tested to confirm the absence of extraneous DNA.

Both the samples under examination and the negative controls used for the experiments illustrated in this work were amplified in triplicate using the AmpFlSTR Identifiler® kit according to the manufacturer’s protocol, with the exception of a 2 minute annealing time and a halved reaction volume with 2.5 U of Taq for 31 PCR cycles. The data were then analyzed with the analysis software GeneScan® and Genotyper® (Applied Biosystems) with a peak height threshold of 75 RFU (Relative Fluorescence Units).

The consensus profile, also called the composite profile, was then generated including all the alleles identified in at least 2 out of 3 replicates.

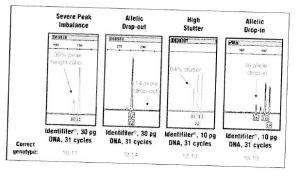

The amplification of LT-DNA samples typically produces stochastic phenomena like allele drop-in and drop-out, peak imbalance at the same locus and an increase in stutter. In the study in question, these effects were shown in DNA quantities equal to and less than 100 pg, and in particular for values below 50 pg. Despite the effects of drop-out for smaller DNA quantities, this method, which provides for the development of the aforementioned parameters, allowed good peak strength to be obtained, often above 1000 RFU.

The experiments carried out further show that, despite the strict application of appropriate protocols to minimize possible sources of drop-in within the laboratory, some spurious alleles in negative controls are nonetheless to be expected.

Recently J.M. Butler and C.R. Hill (2011) also stated that, faced with limited information provided from low amounts of DNA, forensic scientists will continually find themselves needing to address the question of how far to push DNA testing methods. “Low copy number” (LCN) analysis, also known as “low template DNA” (LT-DNA), refers to the enhancing of detection sensitivity, usually through increasing the number of PCR cycles. The stochastic effects associated with this type of analysis are allele or locus drop-out, while increasing the sensitivity of the method increases the potential for contamination or allele drop-in. Validation studies conducted with replicate testing of low amounts of DNA were therefore performed to establish the threshold of allele drop-out and drop-in, using 10, 30 and 100 pg with different commercial STR typing kits, using both a standard and increased number of cycles. The results obtained with heterozygous samples demonstrate that a replicate testing approach provides reliable results with single source samples, if a consensus profile has been created. Due to the limited nature of the biological samples that may be recovered from traces present at a crime scene, decisions often need to be made about whether or not to proceed with testing low amounts of DNA. Just as for any scientific measurement, validation helps to define the limits of the techniques used. In the case of the validation of forensic DNA testing, sensitivity studies, in which control DNA samples are diluted to low amounts, allow a laboratory to establish at which point a detection technique is no longer able to provide reliable results. The authors maintain that repeated testing of replicated aliquots of the same diluted control DNA sample allows the reproducibility of the results to be evaluated. However when increasing the sensitivity of the testing method by increasing the number of PCR cycles, the stochastic effects can become more evident.

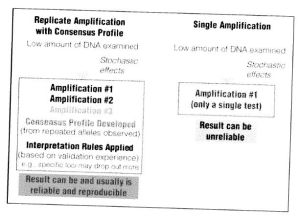

Figure 2. Stochastic effects which happen randomly during the amplification of low amounts of DNA with an increased number of PCR cycles.

While stochastic effects always occur if low quantities of DNA are subjected to PCR (Figure 2), a replicate amplification approach with the development of a consensus profile from the repeated alleles can provide reliable results (Figure 3). However, amplification results from a single analysis can be unreliable due to allele drop-in or drop-out, as previously noted. Individual results can also vary between replicates, but a combined consensus profile can provide an accurate answer if the repeated alleles are reported.
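The consensus approach described above can be illustrated with a minimal sketch. It assumes that an allele is reported only if it appears in at least two replicate amplifications; that cut-off (`min_count`), the function name and the allele values are hypothetical choices made for illustration and are not taken from Butler and Hill.

```python
from collections import Counter

def consensus_profile(replicates, min_count=2):
    """Build a consensus profile from replicate amplifications.

    replicates: list of dicts mapping a locus name to the set of alleles
    observed at that locus in one replicate.
    min_count: assumed reporting rule; an allele must be seen in at least
    this many replicates to enter the consensus.
    """
    loci = sorted({locus for rep in replicates for locus in rep})
    consensus = {}
    for locus in loci:
        counts = Counter()
        for rep in replicates:
            # Count each allele once per replicate in which it was observed.
            counts.update(set(rep.get(locus, ())))
        consensus[locus] = {a for a, n in counts.items() if n >= min_count}
    return consensus

# Hypothetical replicate results (allele values invented for illustration).
replicates = [
    {"D3S1358": {"15", "16"}, "vWA": {"17"}},               # allele 19 drops out at vWA
    {"D3S1358": {"15", "16", "18"}, "vWA": {"17", "19"}},   # allele 18 drops in at D3S1358
    {"D3S1358": {"15"}, "vWA": {"17", "19"}},               # allele 16 drops out at D3S1358
]
profile = consensus_profile(replicates)
```

In this invented example no single replicate gives the full profile, but the spurious allele 18, seen only once, is excluded, while alleles lost in one replicate are still reported because they recur in the other two.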

Every laboratory finds itself needing to confront the analysis of samples with low amounts of DNA. Laboratories and technical staff must decide whether or not to try an “enhanced interrogation technique” such as an increase in the number of cycles, desalting of the samples or an increase in the capillary electrophoresis injection. If such an approach is applied, validation studies need to be performed to develop appropriate interpretation guidelines and to establish the degree of variation which can be expected when low amounts of DNA are analyzed. Deciding when to stop the testing or the interpretation of results can be difficult. Some laboratories halt testing below a certain amount of starting DNA, using the validation data to establish a quantification threshold. Others apply stochastic thresholds during data interpretation to decide which STR typing results are reliable (for example, where one would not expect to find allele drop-out at that particular locus). The authors conclude by affirming that performing experiments similar to those described in their article can help to determine an appropriate stochastic threshold.

Figure 3. Comparison between different approaches when analyzing low amounts of DNA. Replicate amplification with development of a consensus profile and the application of interpretation rules based on validation experience can produce reliable results.

Only a few months ago, some scientists (“Publications and letters related to the forensic genetic analysis of low amounts of DNA”, Forensic Science International: Genetics 5 (2011) 1-5, Editorial) stressed the fact that, particularly over the last two years, numerous publications, communications and letters in various scientific journals have addressed the scientific, technical and legal problems associated with the methods and protocols of LCN typing. The sudden increase in publications relating to this subject was triggered by two important legal cases, in the United Kingdom and the United States respectively (The Queen v Sean Hoey. Neutral Citation Number [2007] NICC 49; The People v. Hemant Megnath, Ind. No. 917/2007, Frye Hearing, 2010). These triggered a heated debate on whether the typing of low amounts of DNA using modified laboratory procedures, such as increasing the number of PCR cycles, post-PCR purification methods and/or increased injection conditions for capillary electrophoresis, has been sufficiently validated by the laboratories which apply them in routine cases. A further problem raised was that adequate and generally accepted approaches for the biostatistical interpretation of results obtained from such modified protocols are still not available, although the basic idea of using a greater number of PCR cycles for more difficult samples was published more than a decade ago (Gill P. et al., 2000; Whitaker J.P. et al., 2001). The scientific journal in question stated that it had accepted numerous letters to the Editor during the last year with the intention of ensuring a free exchange of opinion, but that it had finally been forced to halt this series of exchanges since, rather than attempting to bring new direction or knowledge to the subject, they were limited to provocative statements and sterile criticisms.
However, the Editors of the journal invite the submission of original scientific research which can provide new information about the validation and interpretation of the results obtained from typing DNA of low quantity and/or quality.

Finally, for completeness, it is considered appropriate to recount the methods by which Exhibit 36 was acquired.

From the record of the Court of Assizes hearing on 28/02/2009 (page 175), Inspector Armando Finzi, in service at the Squadra Mobile, was asked about the search of Raffaele Sollecito’s house: “Were you wearing ordinary clothing or did you take precautions?” A: “We were all in ordinary clothes, obviously before entering inside the building all of us put on gloves and footwear”.

It is not clear who collected the knife. Marco Chiacchiera (Court of Assizes hearing on 27/02/2009, page 158): “I took it, put it inside the envelope, closed it, sealed it, and took it back to the police station”. Armando Finzi: “It was the first object which I took, I turned and I showed it to Dr. Chiacchiera, saying to him that in my opinion we had to take this knife because after investigative intuition…” (Court of Assizes, hearing on 28/02/2009, page 178), and it was placed inside a “new envelope where I keep the gloves, the new gloves”; “…I opened the envelope and I put it inside an envelope similar to this one, after which I put it inside the folder and I closed it and I continued the search” (Armando Finzi, Court of Assizes, hearing on 28/02/2009, page 179). Q: “Well then we can clarify, you inserted the knife which you found into a paper envelope similar to that one and you sealed it with tape, it’s correct to say this?” A: “Yes, not there on the spot, in the police station, after doing the paperwork I sealed it with a piece of tape, but it wasn’t properly sealed, there were two gaps, I closed the two gaps so as not to leave the envelope open” (Court of Assizes, hearing on 28/02/2009, page 190).

The documents record a further handover of Exhibit 36 in the police station (Court of Assizes hearing on 28/02/2009, page 202). Stefano Gubbiotti: “I took the knife and I put it inside the…” Q: “You removed it from the envelope with gloves?” A: “I removed it from the envelope with gloves because the volume of the envelope did not allow me to put it inside this box”. “And I put it in the box and I catalogued it, then I closed it with tape”.
